Facebook’s Global Ad Machine Is The Company’s $80 Billion Annual Lifeblood. Workers Say It Puts Profits Over People.

Two years ago, a handful of Facebook employees began to raise internal alarms about a series of advertisements appearing in their news feeds. Purchased by a then up-and-coming lip-synching app called Musical.ly — now known as TikTok — the ads featured teenage girls provocatively gyrating to music in short video clips.

Curious as to why he and his colleagues were seeing ads ostensibly meant for young girls, one Facebook employee, who was also a father, dug into the company's advertising system at the time to determine what was going on. What he discovered wasn't an error, but Facebook's advertising system working as intended. The social network's algorithms had been optimizing the ads for the audience interacting with them the most: middle-aged men.

Initial complaints about the ads, which continued after Musical.ly was acquired and turned into TikTok, were rebuffed. TikTok, which reportedly spent $1 billion on advertising in 2018, was a valued business partner, one employee was told by higher-ups. Another person in a position to know told BuzzFeed News that a Facebook manager’s response to the concerns was to restrict access to data about the ads’ targeting.

The ads persisted for at least a year and a half.

“It’s so weird that I only hear my 8-year old nieces talk about tiktok, but then see these ads with voluptuous young ladies targeted to men over 35 years old,” one Facebook data scientist wrote on the company’s internal message board last year. “Are we indeed making sure Facebook is not creating a predator’s paradise?”

Facebook’s handling of TikTok’s ads is one of many examples of its advertising system run amok, and the company's ongoing prioritization of revenue over the safety of its 3 billion users, the public good, and the integrity of its own platform. The consequences vary: Consumers are sold goods they never receive or are lured into financial scams; legitimate advertisers’ accounts or pages are hacked and used to peddle those nonexistent goods or scams; credit card numbers are stolen. But the end result is often the same: Facebook banks ad revenue, while its users get ripped off.

A BuzzFeed News investigation has found that in relentlessly scaling its ad juggernaut — which is projected by analysts to bring in $80 billion this year — Facebook created a financial symbiosis with scammers, hackers, and disinformation peddlers who use its platforms to rip off and manipulate people around the world. The result is a global economy of dishonesty in which Facebook has at times prioritized revenue over the enforcement of policies seemingly put in place to protect the people who use its platform.

Company insiders said the ad platform’s issues are exacerbated by Facebook's continued reliance on a small army of low-paid, unempowered contractors to manage a daily onslaught of ad moderation and policy enforcement decisions that often have far-reaching consequences for its users. Internal documents and messages, as well as interviews with eight current and former employees and contractors, show that Facebook's ad workers have at times been told to ignore suspicious behavior unless it “would result in financial losses for Facebook,” and that the company is pushing to grow revenue in regions that flood its pages with scams.

Joe Osborne, a Facebook spokesperson, disputed the idea that the company profits from scam ads. The company invests heavily in keeping deceptive and low-quality ads off its platform, and took action against the problematic Musical.ly and TikTok ads, according to Osborne. In June 2019, roughly a year and a half after employees first complained, Facebook introduced a previously unreported policy that forbids ads that suggest the possibility of a sexualized interaction, he said. Facebook did not announce the policy or list it publicly — but that finally put an end to TikTok’s questionable ads.

A spokesperson for TikTok declined to comment.

In reply to a detailed list of questions, Osborne said: "Bad ads cost Facebook money and create experiences people don't want. Some of the things raised in this piece are either misconstrued or missing important context. We have every incentive — financial and otherwise — to prevent abuse and make the ads experience on Facebook a positive one. To suggest otherwise fundamentally misunderstands our business model and mission.”

While it did provide additional information on background, Facebook was unwilling to answer on record many of the questions raised by BuzzFeed News' reporting.

Osborne said internal company data shows that low-quality and scam ads prevent people from wanting to engage with genuine advertisers, which represents a real risk to Facebook’s business and mission.

However, one person with direct knowledge of Facebook ads enforcement said the company is primarily focused on growing revenue, and user safety comes second.

“[Facebook] will take any cent that they can get on anything,” they said. “They do not care whatsoever as long as they’re making a dime out of it.”

An ad behemoth

In its early days, monetization at Facebook was largely an afterthought. Founder and CEO Mark Zuckerberg was focused on growing usage of the platform. It wasn’t until two years after its 2004 founding that the company began building out its advertising business. Zuckerberg accelerated that push into ads in 2008 by hiring Sheryl Sandberg, then Google’s vice president for global online sales, as COO.

In the 12 years since, Facebook has grown from an upstart with less than $300 million in annual sales to a voracious ad behemoth with 10 million monthly active advertisers. Indeed, its core business function is to sell ads. And while it has become the model for online advertising — mining every detail from the profiles and human connections of its users to deliver targeted ads — it has also fostered an explosion in scams and exploitation of the billions of people who use Facebook and Instagram.

Sources say the company’s ad machine leaves Facebook’s users at the mercy of a system that abets cybercriminals, while monetizing hate and failing to stop huge numbers of ads that violate the company’s own policies. Facebook’s powerful ad tools and history of lax enforcement helped it become the preferred platform of shady affiliate marketers and drop shippers that target people with financial scams, trick them into expensive subscriptions, or use false claims and trademark infringement to entice them into overpaying for products that never arrive or are far from what was promised.

In 2018, Bloomberg Businessweek reported that Facebook had a prominent presence at a major affiliate marketing conference. One attendee described Facebook’s renowned targeting capabilities this way: “They go out and find the morons for me.”

The social giant recently began to attack in earnest the ad fraud and deception that has flourished on its platform for roughly a decade. To better vet new advertisers, it capped ad spending for new accounts in countries like China where scam ads proliferate, and last year it began aggressively filing lawsuits against people and companies who compromise ad accounts to run deceptive ads, among other schemes. The company said it has more than 35,000 people working on trust and safety, though it declined to break out the number focused on ad integrity work.

Yet a key issue, according to current and former employees, as well as industry experts, is that Facebook typically keeps the money it earns from scam ads and fraudulent campaigns that pollute its platforms, unless it involves credit card fraud. It earned more than $50 million in revenue over two years from a single shady San Diego marketing agency that ripped off Facebook users by tricking them into hard-to-cancel subscriptions and investment scams, and it banked almost $10 million in advertising revenue from the Epoch Times, a pro-Trump media organization that spreads conspiracies, before banning the outlet’s ads for using fake accounts and other deceptive tactics. Last month, the Financial Times reported that Facebook allowed more than 2,000 illegal ads to run in the United Kingdom and only removed them after being alerted by the country’s Advertising Standards Authority.

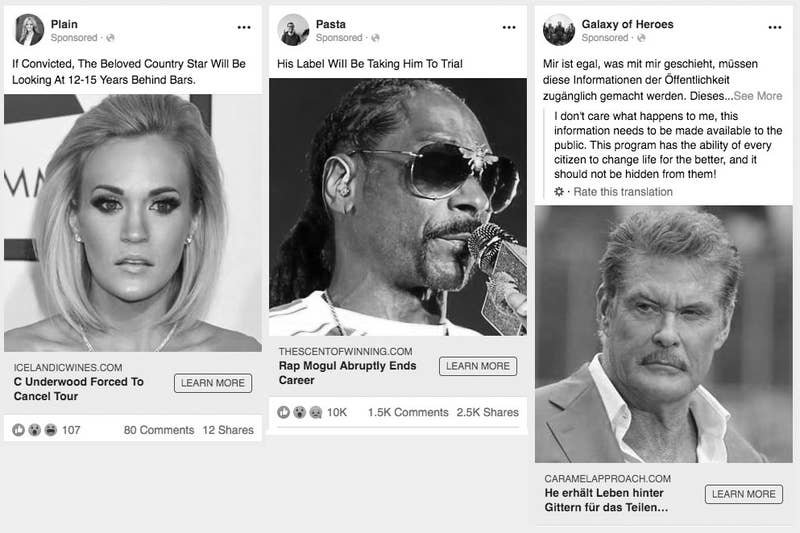

Various celebrity scam ads seen on Facebook.

This year it took money for ads promoting extremist-led civil war in the US, fake coronavirus medication, anti-vaccine messages, and a page that preached the racist idea of a genocide against white people, to name a few examples. On those rare occasions when Facebook does refund significant ad money, it’s typically because it has admitted to overcharging advertisers due to bugs in its measurement systems.

Osborne, the Facebook spokesperson, said the company does not profit from bad ads but declined to comment on the record about the money it earns from scam ads and fraudulent campaigns.

Facebook’s complacency in enforcing its policies to protect users from fraud and exploitation makes it an easy mark for scammers — and raises questions about the company's commitment to forgoing the type of revenue these shady ads generate.

“I think the profit motive definitely makes it harder for them to take real steps here,” said Tim Hwang, the author of Subprime Attention Crisis: Advertising and the Time Bomb at the Heart of the Internet. “It’s also compounded by the fact that what counts as 'bad' revenue they shouldn't profit from is potentially quite a broad category. They don’t want to start down this path if they can help it.”

“Facebook gets paid”

Interviews with current and former Facebook employees and company documents seen by BuzzFeed News paint a picture of an ad business built with the same lax controls and outsourcing of critical moderation work that has caused Facebook to become a fount of disinformation, foreign influence operations, hate speech, and harassment.

As with its content moderation efforts, Facebook uses a mix of software and human reviewers to police its platform for bad ads, scams, and fraud. And, as with its content moderation efforts, it has assigned this critical work to teams of third-party contractors. Paid $18 an hour and lacking the benefits given to Facebook employees, these workers are charged with the daily upkeep and monitoring of the company’s ads system.

“Despite all the claims around artificial intelligence, Facebook’s main solution to the [ad moderation] problem is to outsource it to someone who might actually not have a whole lot of experience,” Hwang said.

“There’s a lot of stuff that just doesn’t sit well with me,” said one contractor who works in the Austin office where one of Facebook’s ads teams is based. This person, who is contracted through third-party firm Accenture, said they were frustrated that their attempts to escalate fraud issues to Facebook employees often result in little or no follow-up.

“It’s really sad because in a lot of these scams people are getting fucked. Their livelihoods are affected,” said the worker, who asked not to be named because their contract prohibits them from speaking to the media.

Some people who use Facebook have been financially devastated by romance and investment scams, as well as by fake products peddled on Facebook Marketplace. A woman in Sweden who spoke with BuzzFeed News earlier this month lost her home and life savings after responding to a cryptocurrency investment ad she saw on Facebook.

Meanwhile, contractors on some teams have been told to ignore larger patterns of fraud and hacked accounts — unless they cost Facebook money. “When I can see an account has been hacked and I’m told to look the other way, it’s really shocking to me,” said a source with knowledge of ads enforcement.

Internal messages seen by BuzzFeed News show an Accenture manager who led a team of 45 ads analysts instructing contract workers to ignore hacked accounts and other violations as long as “Facebook gets paid” for ads through a valid payment method. Even if a hacker had taken over a person’s account, contractors were told to allow the hacker to place ads, as long as the payment method for ads was valid.

“If they are spending their own money and not adding any foreign cards that don’t belong to them, then Facebook doesn’t experience any leakage,” the manager wrote in July of last year. Leakage refers to credit card chargebacks or other scenarios that result in Facebook having to refund the money.

The Accenture manager justified his guidance to ignore potentially hacked accounts and pages by diminishing the risk involved. He said hackers compromise accounts that control large pages because they’re “interested in the broad reach these larger pages have and simply want to reach larger audiences with legitimate ads.”

He framed it as a win-win scenario for Facebook and people exploiting its advertising system because Facebook earns revenue and the hacker can reach more people with their ad. “Facebook gets paid, the bad actors get to run their ads to a larger market,” the manager wrote. He joined Facebook as a full-time risk investigations analyst in April of this year, according to his LinkedIn profile.

Laura Edelson, a researcher with the NYU Online Political Ads Transparency Project, called the directives “quite shocking.”

“What Facebook is concerned about in that guidance is their own credit card charges. They're not necessarily concerned about if users are deceived by advertisers,” Edelson said. “We're talking about advertisers who are hacking someone else's account to run Facebook ads. So these are not necessarily advertisers who are honest actors.”

Osborne, the Facebook spokesperson, said the contractors who were instructed to focus on financial losses to Facebook are part of a team dedicated to preventing financial abuse. He said other teams are responsible for investigating hacked accounts, and that while the teams collaborate, they have separate duties.

Hwang said Facebook has put contractors in a hybrid sales and security role where they have to think about revenue, instead of allowing them to focus solely on risk.

“Facebook has created a position where they're responsible for both of these things,” he said. “It's clear through their policy which one Facebook is prioritizing.”

That tension was highlighted in advance of Election Day in the US. In the weeks leading up to the vote, Facebook moved some of its ad monitors off their usual tasks to focus on helping political advertisers buy as much inventory as they wanted before its ban on new ads took effect a week before Nov. 3. They were also told to restore disabled political ad accounts whenever possible. One document seen by BuzzFeed News told contractors that approving requests from election advertisers to raise their Facebook ad spending limits was their “highest priority.”

“My takeaway is we wanted to allow political advertisers to spend as much as possible, as quickly as possible, and deal with the policy questions later,” said the person with insight into Facebook ads enforcement.

Osborne said the focus on raising daily spend limits ensured that political advertisers could spend what they needed to get their message out before bans went into effect, and was unrelated to Facebook’s revenue goals.

Facebook’s prioritization of raising spend limits for the election follows earlier instructions given to contractors with Accenture and its BCforward subsidiary last July, which emphasized revenue at the expense of user security. The same Accenture manager who gave guidance to focus on whether Facebook will lose money told workers to be more lenient with accounts originating in Russia, Ukraine, and other countries that are known to be centers of hacking, credit card fraud, and cybercrime.

“We have switched back to a model whereby only those accounts that would result in financial losses for Facebook are going to be shut down from RU/UA/surrounding countries,” the manager wrote.

“What this is saying — very clearly, they're not couching it here — is that people who are doing this fraud protection [for Facebook], their only job is to protect Facebook from fraud, not to protect users from fraud,” Edelson said.

Another former Facebook manager who worked with ads products said that directing contractors to ignore compromised or suspicious accounts flies in the face of an oft-cited company tenet: “Nothing at Facebook is somebody else’s problem.”

“The problem is that Facebook’s incentive structure is that they have no incentive to be good actors,” said the former manager, who asked not to be named due to fear of retaliation. “Lots and lots of things slip through the cracks. Their ads fact-checking and policing are not staffed where they need to be.”

That’s left holes in ad enforcement for bad paid content that isn’t caught by Facebook’s automated systems. In August, some Facebook employees became concerned when they noticed ads from a Chinese wholesaler promoting rings with the Nazi SS symbol.

“I reported this ad but was told it doesn’t violate our policies,” a Facebook marketing manager wrote to an internal group for ads feedback. “However, we did pull down a Trump campaign ad for using a symbol associated with the Nazis, so did that establish a precedent?”

One product manager responded and suggested that the ad had slipped through because the company had other engineering priorities.

“We do not have [machine learning] models for low prevalence policy violations such as hate orgs,” the product manager wrote.

“You’re fighting a Hydra”

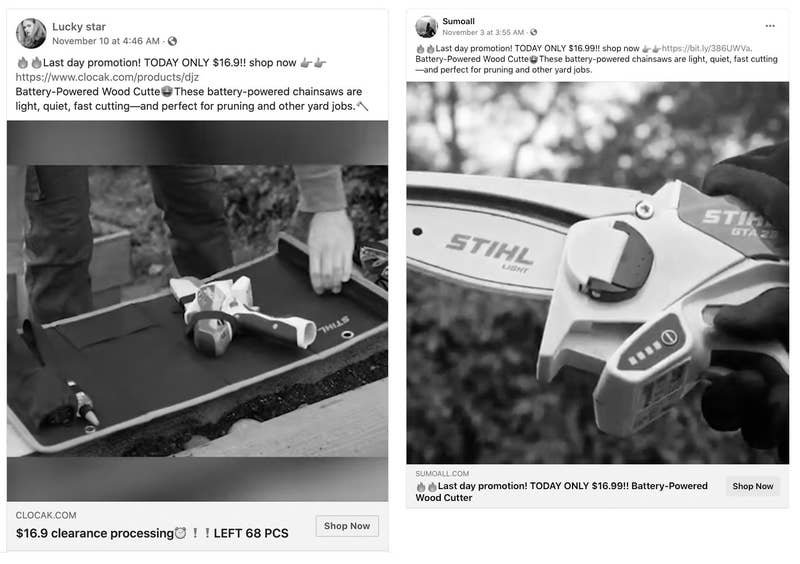

In July, employees at Stihl, the 94-year-old maker of chainsaws and other outdoor equipment, began receiving angry emails from people who said they’d ordered one of its products on Facebook. Instead of receiving the $200 GTA 26 garden pruner chainsaw they saw in slick video ads on the social network, customers were sent a single metal chain, an unrelated product, or nothing at all.

Stihl employees were puzzled. The company doesn’t sell products directly to consumers on Facebook; its equipment is only available from authorized dealers. Yet its new pruner — which quickly sold out earlier in the year — was being marketed all over Facebook for roughly a tenth of the actual price. The bogus ads were appearing on so many pages that Stihl’s employees struggled to locate and report them all.

“It’s frustrating for our customers. It's frustrating for our dealers. It's frustrating for the brand and our reputation,” Michael Camp, a Stihl product information specialist, told BuzzFeed News. “Customers are trying to ask us for answers, and unfortunately, it's absolutely out of our hands.”

Stihl staff have spent months fielding customer complaints without much help from Facebook.

Ads for Stihl products seen on Facebook.

“They’re more reactive than they are actively monitoring,” Camp said of Facebook, adding that it usually takes one or two business days for the social giant to remove ads flagged to it. “For a brand owner, you basically feel like you're fighting a Hydra where you cut off one and then two more follow.”

As of the publication of this story, BuzzFeed News had identified roughly a dozen ads on Facebook that falsely claimed to sell the Stihl pruner. Email addresses, company information, and other details listed on the related online stores show that half of them, including an ad that attracted close to half a million views, were linked to a Chinese company, Ouyi Electronic Commerce Co., Ltd. of Shenzhen. The company did not respond to emailed requests for comment.

The Chinese government banned Facebook from operating in the country in 2009. But the company has still managed to build a multibillion-dollar business selling ad space to China-based companies trying to reach international customers. A study by Pivotal Research Group estimates that Facebook, which partners with several agencies in mainland China, brought in about $5 billion from Chinese advertisers in 2018.

Company documents reviewed by BuzzFeed News reveal that Facebook has been aware of an epidemic of violative ads and scammers operating out of China for years. Yet the company continues to undertake major initiatives to increase its revenue in the country.

Earlier this year, Reuters reported Facebook was moving employees from Silicon Valley to Singapore to focus on growing Chinese revenue. Late last year, Facebook posted on Chinese messaging platform WeChat that it’s “committed to becoming the best marketing platform for Chinese companies going abroad.”

A former Facebook employee said the company knows that an increase in ads from China poses a risk to its global user base. A previous internal study of thousands of ads placed by Chinese clients found that nearly 30% violated at least one Facebook policy. This was uncovered as part of the regular ad measurement work performed by Facebook's policy team. The violations included selling products that were never delivered, financial scams, shoddy health products, and categories such as weapons, tobacco, and sexual sales, according to an internal report seen by BuzzFeed News.

The former employee said scammers in China watch for products and ads that perform well on Facebook, and then pile on with similar offers, as was the case with the monthslong onslaught of ads for the Stihl chainsaw.

Issues with China-based advertisers are well known among the social network’s employees and contractors, said the person with knowledge of Facebook ads enforcement. “We’re not told in the exact words, but [the idea is to] look the other way. It’s ‘Oh, that’s just China being China.’ It is what it is. We want China revenue,” they said.

“I would never ever buy anything on Facebook,” the source added.

Osborne said the company has a dedicated business integrity team in Asia and does not look the other way on scam ads or other policy violations with Chinese advertisers. He said Facebook invests in and builds tools for intellectual property and counterfeit detection.

One former employee said Chinese advertisers who were banned for violating the social network’s policies often return to the platform by setting up new companies and creating new Facebook ads accounts. That was the approach used by ZestAds, a Hong Kong–registered company based in Malaysia whose pages and accounts were banned by Facebook for deceptive e-commerce ads. Months later, it was back on the platform and placing misleading ads for face masks during the first wave of the COVID-19 pandemic this spring.

After years of letting these scams run wild, Facebook recently implemented measures intended to make it harder for unreliable advertisers to quickly scale up their operations. Last year it introduced a $450 daily spend limit for new ad accounts in Asia. When that limit is reached, the account is supposed to receive a manual review before its spend limit is increased. “There is still definitely room to scam but the sophistication it requires is more, and the [profits] are a lot lower,” the former employee said.

Some advertisers have been avoiding the limits by creating multiple companies and multiple ads accounts, or using rented Facebook accounts to buy additional ads, the former employee said. “You spread your risk, and if one is shut down, you still have many that are still running,” they said.

The practice is now so prevalent that earlier this year, Facebook sent a message to advertising partners in Asia asking that they declare all ad accounts under their control. “[Facebook] wants to try and find these accounts but it’s hard to really identify each one of them effectively,” said the former employee.

It’s harder still when such practices are not confined to a single country.

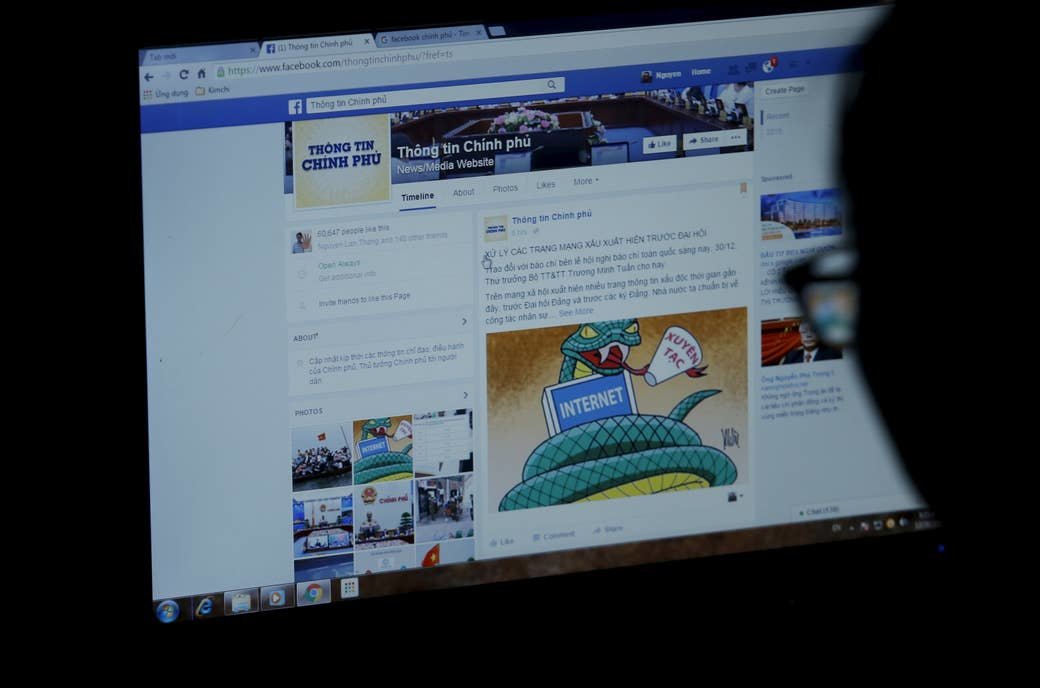

Vietnam

Vietnam has long been among the largest sources of fake ads and scams on Facebook. Many of these are purchased via hacked “business manager” accounts of marketing agencies and media buyers. Since these accounts are typically used to run campaigns for multiple clients, malicious hackers can use them to run large numbers of ads before being shut down. This practice has been going on for years.

In 2015, the SecDev Foundation, a think tank focused on security and international development, called account theft on the social network “an epidemic in Vietnam.”

The problem has gotten so bad that Facebook created a dedicated “Vietnam” queue where contractors review ads and spend limit requests, and analyze payment methods for users from the country, according to information seen by BuzzFeed News.

The additional effort hasn’t deterred the hackers. Last October, a Facebook employee posted in an internal group to say that Vietnamese hackers had come up with a new way to taunt the company: After compromising an account, they would change its profile photo to the ISIS flag, causing the social network’s terrorist content moderation system to kick in and make it harder for the owner to regain access.

“Our lovely creative Hackers from VN are back but this time leaving a note for us on their Victim’s profile,” wrote the employee, who noted the hackers were “ramping up in [ad] spend.”

Facebook reportedly earned around $1 billion in revenue from Vietnam in 2018, though it’s unclear if that figure captured the amount of money spent by Vietnam-based people who buy ads using hacked accounts or stolen credit cards. The company declined to provide revenue figures for Vietnam or China.

Hai Hoang, a US resident born in Vietnam who ran a marketing agency that placed Facebook ads for clients in the country, told BuzzFeed News there is a black market for selling hacked ads accounts in the Southeast Asian country. Facebook banned Hoang’s company, Minerva Ads, this summer after it placed ads for clients selling Black Lives Matter and George Floyd apparel as well as pro-police and pro-Trump items.

Devon Kearns, a Facebook spokesperson, told BuzzFeed News in June that it removed Vietnamese-managed Pages associated with Hoang’s company because they were “deceiving people about their origin and purpose to drive them to websites selling merchandise.”

Hoang said he was never given a clear explanation for his ban, but noted it came after Minerva was named an official Facebook partner and spent roughly $5 million on ads.

He said Facebook’s recent crackdown on Vietnamese scammers often results in legitimate advertisers being banned, which leads them to pay hackers for access to compromised accounts that can run ads. His former clients chose to partner with agencies in China where Facebook’s oversight is more lax, according to Hoang.

“Facebook has a lot of [ad] resellers in China so a lot of my old clients went through China,” he said.

The Vietnam-based clients who were banned by Facebook in June are now running the same kind of ads without issue by purchasing them through Facebook-affiliated agencies in China, according to Hoang. “They didn’t get banned,” he said.

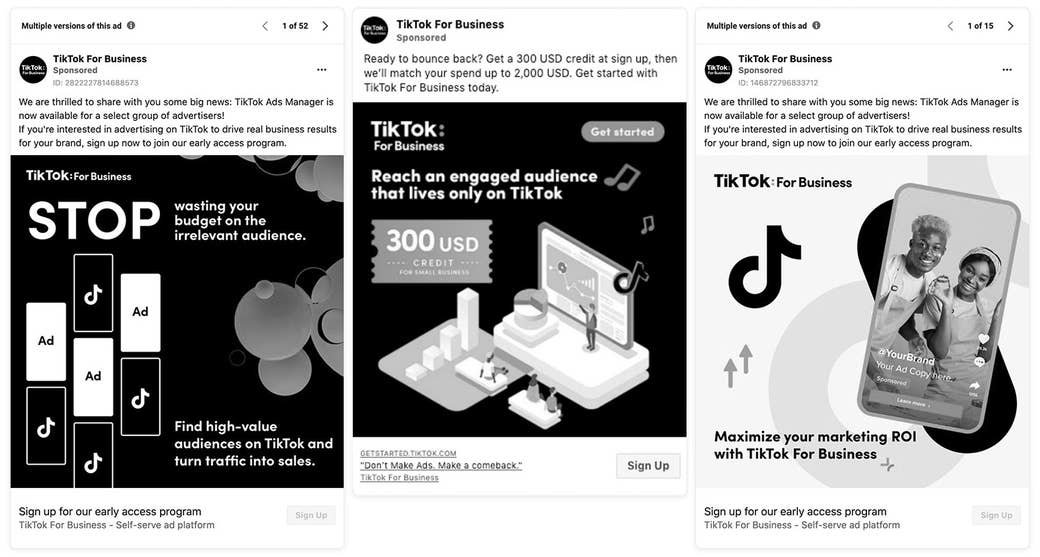

A different TikTok ad

Today, the video app’s Facebook ads are often aimed at convincing businesses and marketers to advertise on TikTok. The TikTok for Business Facebook page typically has hundreds of active ads and recently offered businesses a $300 ad credit to try its own platform.

Yet, as in so many other places on Facebook where money flowed, scams followed. This fall, a flood of fake TikTok for Business pages appeared with ads on Facebook touting the same offers.

Niek van der Maas, a marketer in the Netherlands, fell for one. After clicking on the ad and installing what he thought was a TikTok-sanctioned application, his Facebook business manager account was hacked and used to spend more than 8,200 euros, or $9,950, on a Vietnamese Facebook advertisement for rolls of tape. The ad reached some 2.6 million people and generated over 2,100 responses, according to a blog post van der Maas wrote about the incident.

“This is a cautionary tale of how I lost close to €4,000 in a sophisticated Facebook scam,” wrote van der Maas, who noted that Facebook refunded the amount spent on fraudulent ads using his credit card. “I still find it hard to believe I fell for a scam like this.”

He declined to comment to BuzzFeed News. Facebook also declined to say what happened to the remaining roughly 4,000 euros the hacker spent buying ads on its platform.

In his post, van der Maas warned other advertisers of Facebook’s inability to police its platform.

“Good scams can fool even the Facebook ad review team,” he wrote.